Enhancing Fairness: How AI-Driven Assessments Reduce Hiring Bias


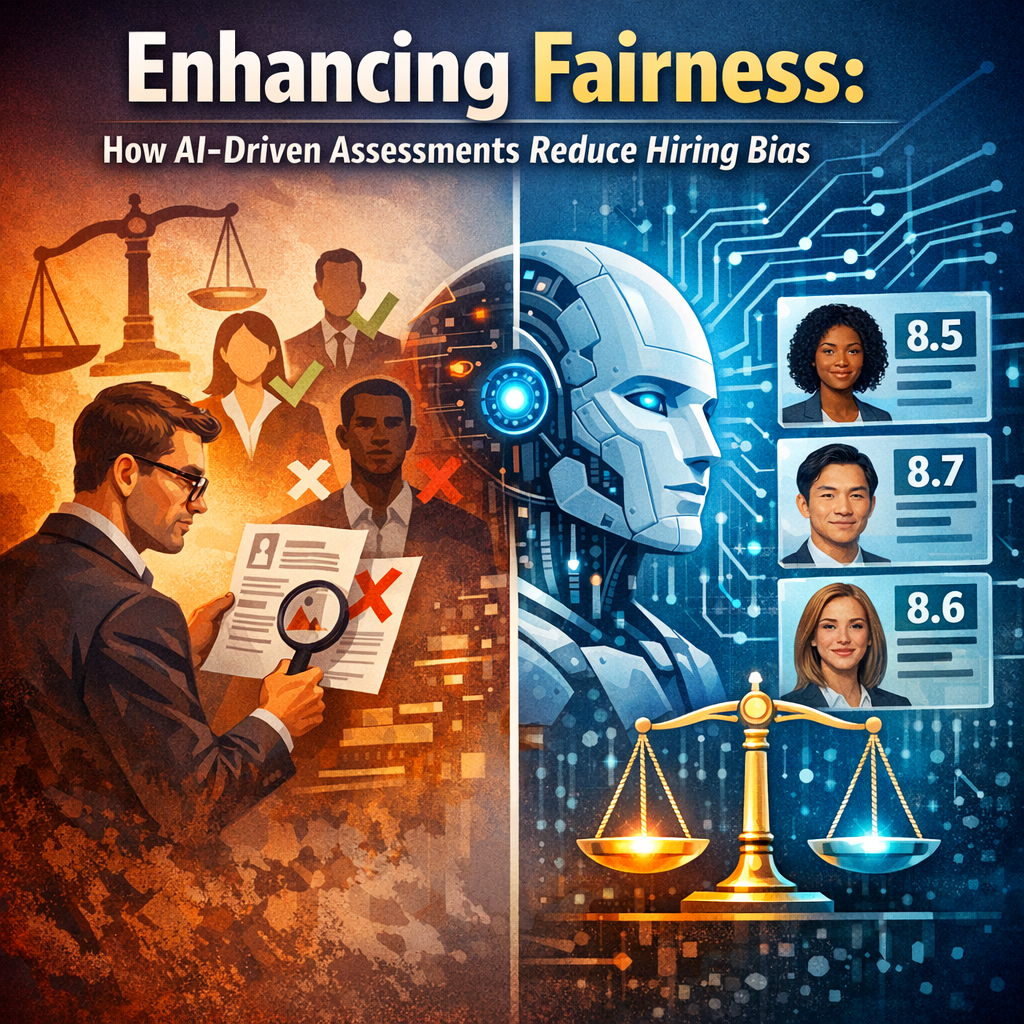

The push for more equitable hiring practices has been at the forefront of the corporate world in recent years. Traditional hiring methods are often shaped by unconscious biases, which has prompted many organizations to turn to technological solutions. Among these, AI-driven assessments stand out as a promising tool for improving both the fairness and the accuracy of recruitment.

Understanding Bias in Traditional Hiring

Before looking at how AI can help, it’s important to recognize the biases commonly found in traditional hiring processes. Common examples include:

- Confirmation Bias: Interviewers seek out information that confirms their preconceived notions about a candidate.

- Affinity Bias: Employers favor candidates who share their backgrounds or interests.

- Perception Bias: Interviewers form stereotyped judgments about a candidate’s abilities based on factors like gender, race, or age.

These biases can lead to skewed and unfair hiring decisions that rest on factors other than an applicant’s ability or potential to perform in the role.

The Role of AI in Enhancing Hiring Fairness

AI-driven assessments are designed to reduce these biases by applying a more standardized approach to evaluating candidates. They can change how candidates are assessed through the following mechanisms:

Data-Driven Decisions

AI tools draw on large data sets to make decisions. When their algorithms are designed to focus strictly on the skills and experience relevant to the job, these tools can evaluate candidates more consistently and limit the influence of individual human prejudices on the results.
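As a minimal sketch of "focusing strictly on job-relevant skills," the scorer below reads only a whitelist of criteria and never looks at any other field. The field names and weights (`skills_test`, `work_sample`, `structured_interview`) are hypothetical placeholders, not taken from any real product; in practice they would come from a validated job analysis. Note that a whitelist only prevents *direct* use of personal details: a whitelisted feature that correlates with a protected attribute, or weights learned from biased historical decisions, can still carry bias.

```python
# Hypothetical job-relevant criteria and weights, all on a 0-100 scale.
# A real system would derive these from a validated job analysis.
JOB_RELEVANT_WEIGHTS = {
    "skills_test": 0.5,
    "work_sample": 0.3,
    "structured_interview": 0.2,
}


def score_candidate(record: dict) -> float:
    """Weighted score computed only from whitelisted, job-relevant fields.

    Any other keys in the record (name, age, photo, ...) are never read,
    so they cannot change the result. Missing criteria count as 0.
    """
    return sum(
        weight * float(record.get(field, 0.0))
        for field, weight in JOB_RELEVANT_WEIGHTS.items()
    )


# Two candidates with identical job-relevant results but different
# personal details receive identical scores.
alice = {"name": "Alice", "age": 29, "skills_test": 80, "work_sample": 70, "structured_interview": 90}
bob = {"name": "Bob", "age": 55, "skills_test": 80, "work_sample": 70, "structured_interview": 90}
print(score_candidate(alice), score_candidate(bob))
```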

Consistent Interview Processes

AI-driven platforms can conduct initial screening interviews in which every candidate answers the same questions in the same order. This standardization helps ensure that no candidate gains an advantage or suffers a disadvantage because of variations in how interviews are conducted.
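The sketch below illustrates the standardization idea under simple assumptions: a single fixed question script shared by everyone, and one anchored 1-5 rating scale applied to every answer. The question texts, IDs, and scale are hypothetical examples, not any vendor's format. The scorer rejects rating sets that skip a question or fall outside the shared scale, so every candidate is measured against the same structure.

```python
# Hypothetical fixed script: the same questions, in the same order, for everyone.
QUESTIONS = (
    ("q1", "Describe a time you resolved a disagreement within a team."),
    ("q2", "How would you investigate a sudden drop in a key metric?"),
    ("q3", "How do you prioritize when two deadlines collide?"),
)
RUBRIC_MIN, RUBRIC_MAX = 1, 5  # one anchored rating scale shared by all reviewers


def interview_script() -> list:
    """Return the question texts in a fixed order; nothing varies per candidate."""
    return [text for _, text in QUESTIONS]


def score_responses(ratings: dict) -> float:
    """Average the rubric ratings, requiring exactly one in-range rating per question."""
    expected = {qid for qid, _ in QUESTIONS}
    if set(ratings) != expected:
        raise ValueError(f"ratings must cover exactly {sorted(expected)}")
    for qid, value in ratings.items():
        if not RUBRIC_MIN <= value <= RUBRIC_MAX:
            raise ValueError(f"{qid}: rating {value} is outside {RUBRIC_MIN}-{RUBRIC_MAX}")
    return sum(ratings.values()) / len(QUESTIONS)
```

For example, `score_responses({"q1": 4, "q2": 3, "q3": 5})` averages to 4.0, while a rating set missing `q2` raises an error instead of silently producing a score from fewer questions.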

Blinded Recruitment Methods

Some AI systems anonymize candidate details that are irrelevant to the job but can be sources of bias, such as names, gender, and ethnic background. By focusing solely on the credentials and answers applicants provide, these systems promote a more equitable evaluation process.
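A minimal sketch of blinding a structured candidate record before reviewers see it, assuming candidates arrive as Python dictionaries. The set of redacted field names (`REDACTED_FIELDS`) and the `candidate_id` key are hypothetical choices for illustration. The function returns a new record with those fields dropped and an opaque ID attached; the original record is left untouched so that the link back to the real identity can be stored separately from the review workflow.

```python
import copy
import uuid
from typing import Optional

# Hypothetical set of fields treated as job-irrelevant but potentially biasing.
REDACTED_FIELDS = {"name", "gender", "ethnicity", "date_of_birth", "photo_url", "home_address"}


def anonymize(candidate: dict, candidate_id: Optional[str] = None) -> dict:
    """Return a blinded copy: biasing fields dropped, an opaque ID added.

    The input record is not modified, so the mapping back to the real
    identity can be kept separately, away from reviewers.
    """
    blinded = {
        key: copy.deepcopy(value)  # deep copy so edits to the blinded view never leak back
        for key, value in candidate.items()
        if key not in REDACTED_FIELDS
    }
    # Use the caller's ID if given, otherwise generate a random one.
    blinded["candidate_id"] = candidate_id or uuid.uuid4().hex
    return blinded
```

Dropping structured fields does not scrub free text: a cover letter, email address, or file name can still reveal identity, so a fuller blinding step would also need to redact unstructured content.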

Examples of AI Tools Improving Recruitment

Several AI tools have been developed with the goal of reducing bias in recruitment:

- Pymetrics: Uses neuroscience-based games to assess candidates’ cognitive and emotional traits, aiming to recommend candidates based on potential rather than past job titles or education levels.

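- HireVue: Provides AI-driven video interviewing solutions intended to surface insights beyond what an interviewer might perceive in a traditional interview. Its assessments originally analyzed both verbal and non-verbal cues, but the company announced in 2021 that it had stopped using facial analysis.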

- GapJumpers: Implements a “blind audition” process, where candidates perform job-specific tasks without revealing their identities. This focuses the selection process on performance and skill.

The Future of AI in Hiring

As AI technology evolves, its potential to improve hiring fairness and accuracy grows. Advances in machine learning can help refine algorithms to reduce bias further, while developments in Natural Language Processing (NLP) can improve the analysis of interviews and written materials.

However, it’s crucial for AI developers and the organizations that deploy these tools to monitor and audit them continually. Regular audits are the only way to confirm that AI assessments do not reproduce the biases they are meant to eliminate.
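One widely used audit check in US practice is the "four-fifths rule" from the EEOC's Uniform Guidelines on Employee Selection Procedures: if any group's selection rate is less than 80% of the rate for the group with the highest rate, that is generally regarded as evidence of adverse impact. The sketch below computes these impact ratios from (selected, applicants) counts per group. The group labels and counts are hypothetical audit data, and the function names are illustrative, not part of any auditing library.

```python
from typing import Dict, List, Tuple


def selection_rates(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Map each group to selected / applicants; groups with no applicants are skipped."""
    return {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def impact_ratios(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values(), default=0.0)
    if highest == 0:
        raise ValueError("no candidates were selected; impact ratios are undefined")
    return {group: rate / highest for group, rate in rates.items()}


def flag_adverse_impact(outcomes: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Hypothetical audit data: (selected, applicants) per group.
audit = {"group_a": (48, 100), "group_b": (30, 100)}
print(impact_ratios(audit))
print(flag_adverse_impact(audit))
```

Here group_b's rate (0.30) is 62.5% of group_a's (0.48), so it is flagged. A flag is a signal to investigate rather than a legal conclusion; with small applicant pools the ratio is noisy, which is why auditors often pair it with statistical significance tests.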

Challenges and Considerations

Despite these benefits, implementing AI in hiring poses several challenges:

- Algorithmic Transparency: It’s essential that the criteria and decision-making processes of AI tools are transparent to maintain trust among candidates.

- Regular Updates: AI models need regular retraining and review. They can become less accurate as roles and applicant pools change, and they can inherit biases from the historical data they learn from.

- Legal and Ethical Implications: Organizations must navigate the complex legal landscape around automated decision-making to ensure compliance with employment laws.

Conclusion

Integrating AI-driven assessments into hiring offers promising ways to address long-standing biases. By automating parts of the recruitment process and keeping the focus on essential job skills and qualifications, AI can lead to fairer, more objective, and more accurate hiring outcomes, provided the systems are monitored and audited carefully. As the technology develops, its role in fostering more inclusive workplaces is likely to expand across many sectors.